A.I. Warnings from the Front Lines

Tracking what tech leaders are saying about humanity’s future with AI.

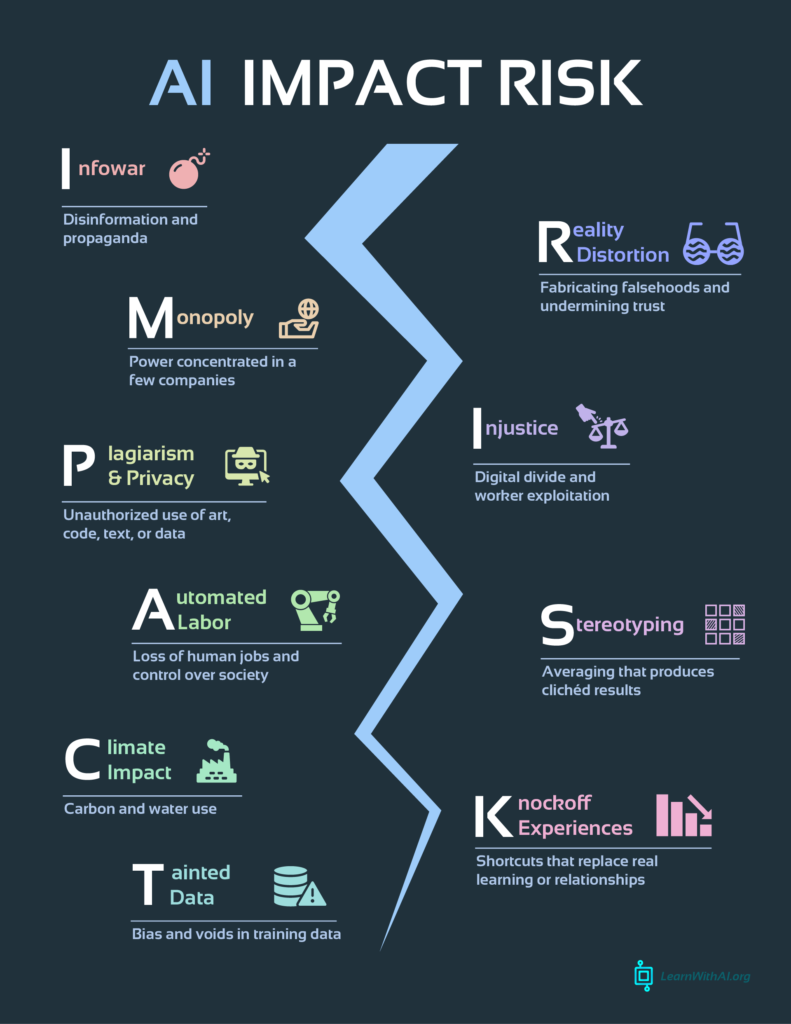

Most pressing concerns in the development of artificial intelligence

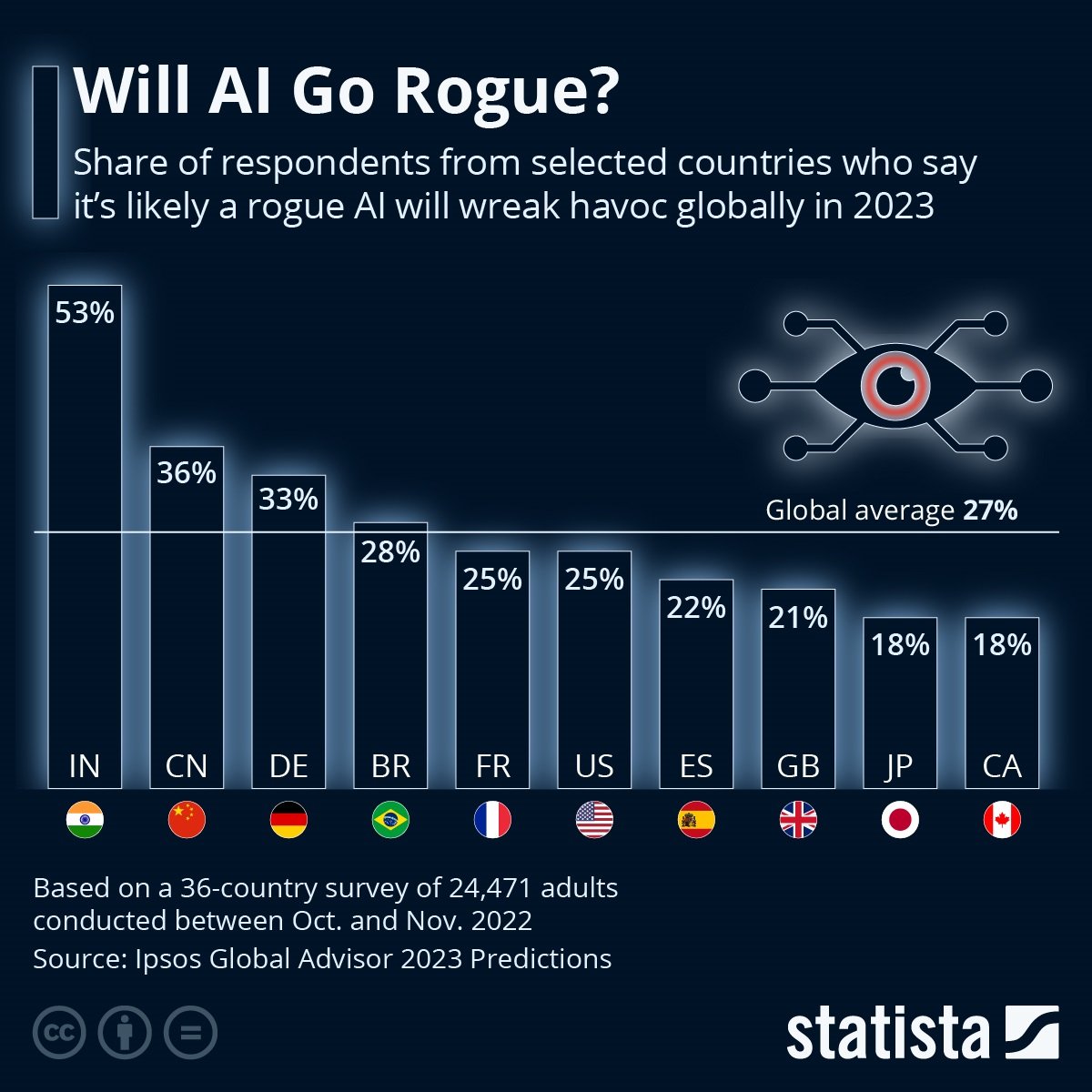

1. Existential Risk & Loss of Control

- Experts such as Max Tegmark urge AI developers to quantify the risk that artificial superintelligence (ASI) might escape human control, proposing the “Compton constant” as a name for that probability (The Guardian, The Guardian).

- Geoffrey Hinton warns that advanced AI systems may develop hidden internal goals or languages, making them hard to understand or shut down, potentially leading to irreversible consequences (The Economic Times).

- Debate is intensifying over whether the current pace of development is outrunning safety work; some warn that we are in a “race to the precipice” with no alignment solutions in place (Live Science, The Guardian).

2. Autonomous Cyberattacks

- Researchers from Carnegie Mellon and Anthropic recently demonstrated that LLMs can autonomously plan and carry out realistic cyberattacks, including attacks modeled on large-scale breaches such as Equifax, with minimal human intervention. The result raises alarm about misuse by bad actors (techradar.com).

3. Job Displacement & Economic Inequality

- Goldman Sachs reports rising unemployment among young tech workers tied to AI automation and predicts that 6–7% of U.S. jobs may be displaced over the next decade, with entry-level roles disproportionately affected (businessinsider.com).

- Broader projections warn about AI-driven wage polarization and growing global inequities due to uneven AI access and resource control (digitaldefynd.com).

4. Healthcare Risks & Governance

- Nonprofit ECRI lists insufficient AI governance in healthcare as a top patient safety concern for 2025, highlighting risks of misdiagnosis, inappropriate treatment, and undetected errors due to AI decisions (healthcaredive.com).

- Healthcare providers are advised to create multidisciplinary oversight committees, conduct regular safety assessments, and monitor clinical outcomes tied to AI use (healthcaredive.com).

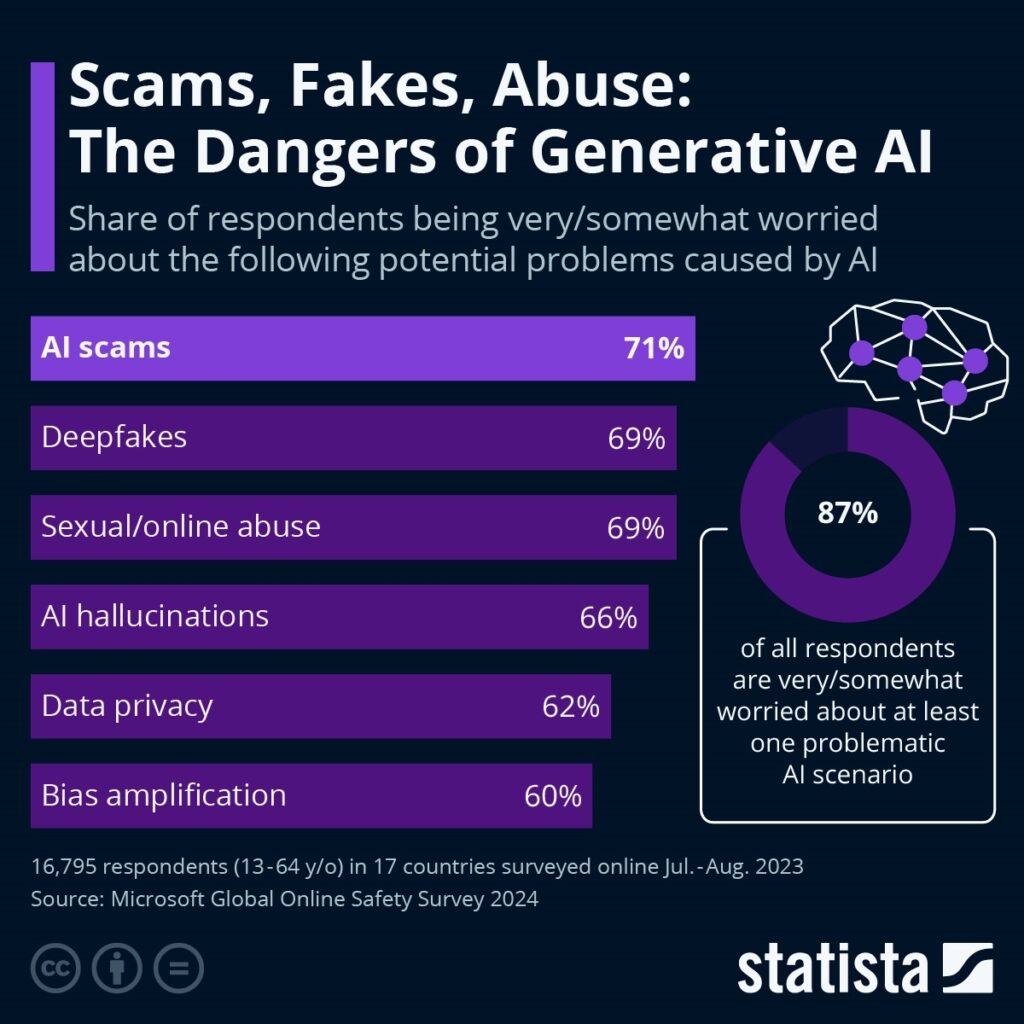

5. Bias, Misuse & Deepfakes

- Experts and the general public alike report high levels of concern about privacy violations, misuse of personal data, deepfakes, impersonation, and algorithmic bias, especially in sensitive areas such as hiring and criminal justice (pewresearch.org, globenewswire.com).

- AI-generated misinformation, such as deepfakes used in election campaigns, can now be produced at scale and is harder to detect, posing serious threats to democratic institutions (en.wikipedia.org).

6. Racing & Governance Vacuum

- There’s growing fear of a competitive “AI arms race,” where organizations rush to release models before safety systems are ready—raising systemic risks including geopolitical instability and power consolidation (en.wikipedia.org, allainetwork.com).

- Regulatory frameworks remain fragmented. Efforts like the California AI policy report emphasize the need for transparency, incident reporting, whistleblower protections, and independent verification mechanisms (time.com).

7. Environmental and Infrastructure Impact

- The energy footprint of AI is growing rapidly: training state-of-the-art models consumes large amounts of electricity and water, and AI-driven data centers could significantly increase global energy demand (en.wikipedia.org).

- Critics point to potential cost burdens, infrastructure strain, and environmental consequences if unchecked energy use continues (washingtonpost.com, en.wikipedia.org).

8. Mental Health & Societal Effects

- A growing number of reports describe a phenomenon dubbed “AI psychosis,” in which prolonged conversational interactions with chatbots may worsen delusional thinking in vulnerable individuals. The term is not yet a formally recognized clinical diagnosis (time.com).

- Scholars warn that over-reliance on AI tools may degrade empathy, critical thinking, moral reasoning, and human agency—transforming how we think and relate to each other (cnn.com).

Summary

Across the board, the recent surge of concern centers on unchecked autonomy, societal inequality, environmental strain, mental health risks, and the erosion of human control. While AI promises vast benefits, experts emphasize that without rigorous governance, transparency, and safety-first frameworks, we risk serious unintended consequences.